Haptic API suite - developer documentation

- Getting started

- Compiling the Haptic API suite

- Content of the suite

- Chai 3D - minimal

- Chai 3D - high-level

- HDAL - minimal

- Metuunt

- JTouchToolkit - minimal

- libnifalcon - minimal CLI

- Chai 3D - haptic benchmark

- HAPI - minimal

- H3DAPI - highlevel

- CHAI 3D

- Installation prerequisites

- Disclaimer

Getting started

The Haptic API suite source code is available from the public Subversion repository at svn://cgg.mff.cuni.cz/HapticApi. Please read the user documentation before reading this document.

Compiling the Haptic API suite

The source code is provided as a Microsoft Visual Studio 2008 solution.

As a first step, check out the latest version of the Haptic API suite source code.

Go to the trunk\src\HapticApi directory and open HapticApi.sln.

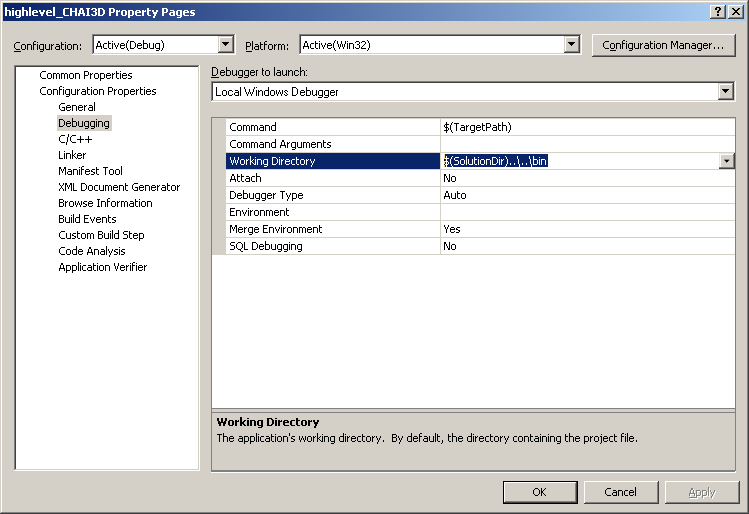

Because the working directory setting is saved in user-specific project files, you have to either edit and rename the provided user project file (e.g. chai3d_high-level.vcproj.Mus-PC.Mus.user)

or change the setting directly in the project properties: Configuration Properties -> Debugging -> Working Directory.

Set the working directory to $(SolutionDir)..\..\bin

Content of the suite

The Haptic API suite tries to cover most of the APIs usable with the Novint Falcon device.

Low-level APIs such as HDAL SDK and libnifalcon support just the Novint Falcon device. High-level APIs support various devices from Sensable, Force Dimension or HapticMaster.

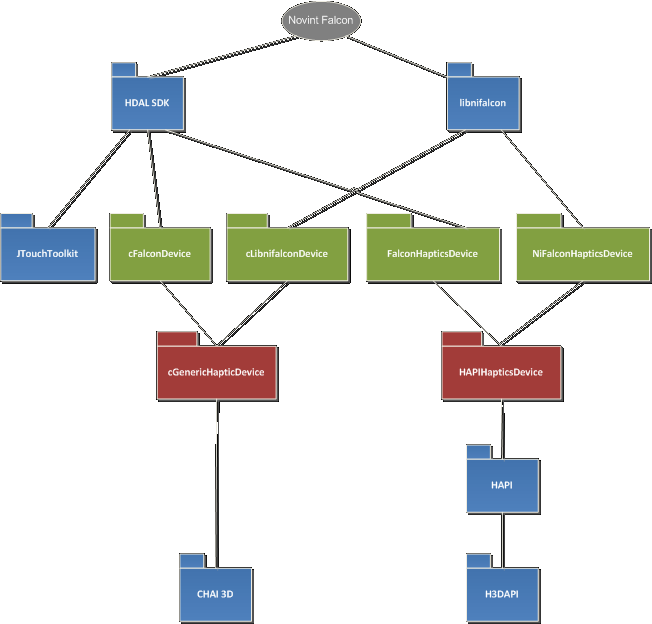

Schema 1 shows the system architecture layers, starting from the Novint Falcon device at the top and ending with the high-level scene graph APIs at the bottom.

Blue boxes represent APIs you can use in your application, green boxes are classes wrapping a driver or low-level API, and red boxes represent the abstract device class that a larger software development kit or application programming interface uses to communicate with many different haptic devices.

Schema 1 - Haptic API suite system architecture layers

Testing applications

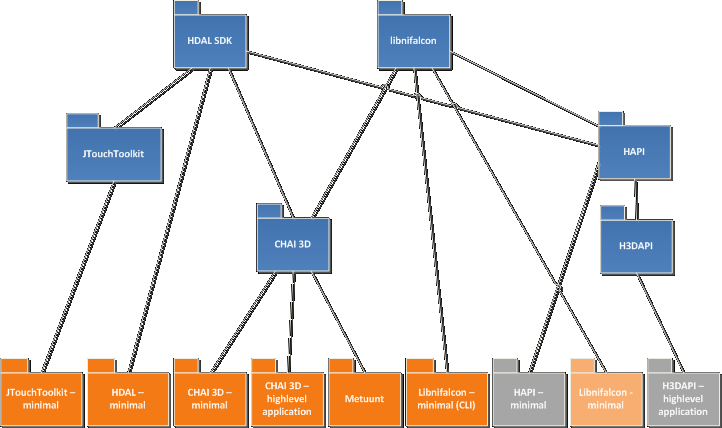

As a part of the Haptic API survey, the Haptic API suite presents haptic APIs across a wide range of platforms and programming languages, primarily intended for the Novint Falcon device.

Blue boxes in Schema 2 represent the APIs used, as in the first schema.

Orange boxes represent the available testing applications that are linked to the specific API.

Grey boxes were applications that were still in development and are now available in the Haptic API suite.

Schema 2 - Haptic API survey testing applications

Project CHAI3D - minimal

This project is a minimal project type which shows the capabilities of the API (in this case low-level access to the haptic device) in a really minimalistic way.

My goal was to show a programmer how easy it is to develop an application with haptic device support using a particular API.

Every API in the Haptic API suite has its own minimal project. Project CHAI3D - minimal focuses on the CHAI 3D API, which is known as a scene graph API.

The scene graph provides not only communication with the haptic device but also specific ways to render graphics and haptics.

In this particular minimal project, the use of the scene graph is omitted.

The reason is very simple: CHAI 3D supports many of the haptic devices available on the market in a very simple way (as shown in the source code below), so it may be preferable to use CHAI 3D rather than a complicated manufacturer SDK.

At the time of writing this document, Force Dimension, Novint Technologies, MPB Technologies and Sensable Technologies devices are supported.

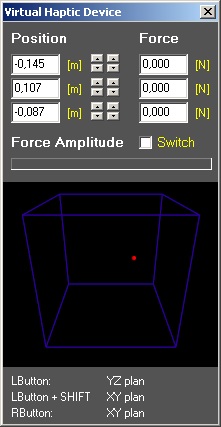

There's also a virtual haptic device available for Microsoft Windows that uses the mouse to change the position of the virtual device.

When you hold the left mouse button you change the position in the YZ plane, and when you hold the right mouse button or the left mouse button + SHIFT key you move in the XY plane.

You can see the force sent to the device by your application in the status bar.

The way CHAI 3D provides communication with the haptic device may seem astonishingly easy and powerful to inexperienced haptic device programmers.

In fact it really is. All you need to work with the haptic device are two vectors: position and force.

The position vector is actually a point in the workspace.

The force vector sets the direction of the force you feel through the haptic device, and its magnitude is the strength of that force. It's as easy as that.
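
As a rough illustration of the position/force idea, the sketch below computes a spring force that pulls the device back towards an anchor point. It does not use CHAI 3D: Vec3 is a hypothetical stand-in for cVector3d, and springForce, its stiffness parameter and the units are assumptions made for this example only.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for a 3D vector type such as cVector3d.
struct Vec3 {
    double x, y, z;
};

// Magnitude of a vector, i.e. the strength of a force.
double length(const Vec3& v) {
    return std::sqrt(v.x * v.x + v.y * v.y + v.z * v.z);
}

// Spring force pulling the device from `position` back to `anchor`.
// The direction points from the position towards the anchor and the
// magnitude grows linearly with the distance (F = k * (anchor - position)).
Vec3 springForce(const Vec3& position, const Vec3& anchor, double stiffness) {
    assert(stiffness >= 0.0);
    Vec3 force = { stiffness * (anchor.x - position.x),
                   stiffness * (anchor.y - position.y),
                   stiffness * (anchor.z - position.z) };
    return force;
}
```

With the anchor at the origin, a stiffness of 200 and a position of (0.01, 0, 0), the resulting force is (-2, 0, 0): it points back towards the origin and its magnitude is 2.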

What is even better is that you don't have to bother whether you have connected two or three haptic devices to your computer.

The cHapticDeviceHandler class tells you how many devices CHAI 3D recognized in your system: simply invoke its getNumDevices() method, which returns an integer.

When you decide to work with the first (index 0) device in your computer, you just initialize the cGenericHapticDevice class with the handler.

cGenericHapticDevice is the abstract class that every haptic device implementation has to inherit from in order to work with CHAI 3D.

This class contains the methods open() and initialize(), which save hundreds of lines of code compared to using a specific device driver.

You can also fetch information about the device, for example the name of the manufacturer, the device name, the maximum force, the workspace radius of the device in meters, etc.

The cHapticDeviceInfo structure and the getSpecifications() method let you do that.

As this project aims to be really minimalistic, it does not use a separate haptic thread (which CHAI 3D supports too) and simply runs an infinite loop that ends when a haptic button is pressed.

CHAI 3D provides the cVector3d class for working with three-dimensional vectors, as well as many math functions.

To get the position of the haptic device, the project simply calls the method getPosition(cVector3d& a_position) and passes a reference to the position vector.

The last piece of code detects user switches (haptic button presses) and checks whether the position has changed, so that it doesn't print the same coordinates again.
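
The loop logic described above (print only when the position changes, stop on a button press) can be sketched without any haptic library. This is not the project's source code: Sample, samePosition and pollUntilButton are hypothetical names, and a pre-recorded list of samples stands in for the real device.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cVector3d.
struct Vec3 {
    double x, y, z;
};

// One reading from the (simulated) device: position and user switch state.
struct Sample {
    Vec3 position;
    bool buttonPressed;
};

bool samePosition(const Vec3& a, const Vec3& b) {
    return a.x == b.x && a.y == b.y && a.z == b.z;
}

// Walks through the samples like the polling loop would and returns the
// positions that would be printed: a position is reported only when it
// differs from the previously reported one, and the loop ends at the
// first sample with the button pressed.
std::vector<Vec3> pollUntilButton(const std::vector<Sample>& samples) {
    std::vector<Vec3> printed;
    for (std::size_t i = 0; i < samples.size(); ++i) {
        if (samples[i].buttonPressed) {
            break;  // haptic button press ends the loop
        }
        if (printed.empty() || !samePosition(printed.back(), samples[i].position)) {
            printed.push_back(samples[i].position);
        }
    }
    return printed;
}
```

In a real application the samples would come from getPosition and the user switch query inside the loop instead of a recorded list.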

This example doesn't show how to send forces to the device.

You can do that by simply calling the method setForce(cVector3d& a_force) on the cGenericHapticDevice instance with an appropriate force vector.

I recommend the CHAI 3D examples page for more sophisticated examples.

Project CHAI3D - minimal source code:

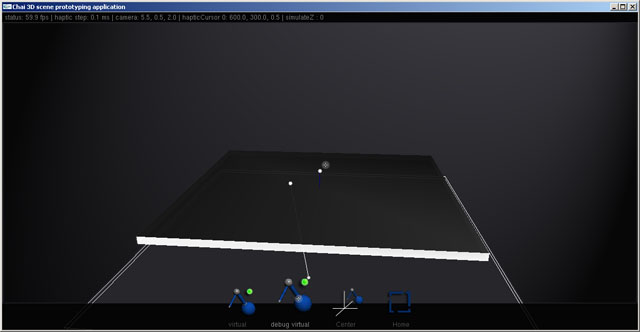

Project CHAI3D - high-level

This project aims to show the possibilities of the CHAI 3D scene graph API.

Its main purpose is to use the functionality CHAI 3D offers to developers.

To satisfy this requirement, the application wraps the basic scene graph functionality and provides a graphical user interface.

The CHAI 3D high-level testing application became a sort of 3D haptic prototyping application during development.

All the source code conventions are hopefully CHAI 3D compatible (no doxygen here).

CHAI 3D provides OpenGL graphics rendering but it won't open any windows or process input devices (mouse, keyboard, etc.).

The CHAI 3D high-level application uses one of the simplest libraries for this purpose, the OpenGL Utility Toolkit (GLUT).

GLUT manages cross-platform window creation, input device processing and frame rendering.

It is a C library and all updates (render, input) are managed via C function pointer callbacks that have to be global or static.

The file system.cpp contains all system calls to and from GLUT, such as the updateGraphics, updateMouse and even updateHaptics functions.
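
The restriction that C callbacks must be global or static can be illustrated with a small sketch. It does not use GLUT: registerDisplayCallback and fireDisplayCallback are hypothetical stand-ins for a C API that accepts plain function pointers, and the Renderer class is an assumption made for this example, not part of the application.

```cpp
#include <cassert>

// Hypothetical C-style API: it can only store a plain function pointer.
typedef void (*Callback)();
static Callback g_displayCallback = 0;

void registerDisplayCallback(Callback cb) { g_displayCallback = cb; }

// Simulates the library invoking the registered callback once per frame.
void fireDisplayCallback() {
    if (g_displayCallback) g_displayCallback();
}

class Renderer {
public:
    Renderer() : m_frames(0) {}

    // Registers a static member function; a non-static member function
    // cannot be passed because it needs a `this` pointer.
    void attach() {
        assert(s_instance == 0 || s_instance == this);
        s_instance = this;
        registerDisplayCallback(&Renderer::displayThunk);
    }

    int frames() const { return m_frames; }

private:
    // Static trampoline: forwards the C callback to the attached object.
    static void displayThunk() {
        if (s_instance) s_instance->render();
    }

    void render() { ++m_frames; }

    static Renderer* s_instance;
    int m_frames;
};

Renderer* Renderer::s_instance = 0;
```

Keeping such trampolines in one place, as system.cpp does for the GLUT callbacks, keeps the global state out of the rest of the code.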

CHAI 3D itself contains support for threads (the Win32 CreateThread function and POSIX threads).

You can create a new cross-platform thread this way:

cThread* hapticsThread = new cThread();

hapticsThread->set(updateHaptics, CHAI_THREAD_PRIORITY_HAPTICS);

CHAI 3D does not implement any cross-platform way of controlling access to shared resources. If you do not intend to use threads in a more complex way, you can get along with the CHAI 3D implementation. The CHAI 3D high-level application needs such control, which is why it uses the C++ Boost library and its mutex implementation. Example of initializing a separate haptic thread:

//

// Initializes haptic thread

//

void initHaptic()

{

//cThread* hapticsThread = new cThread();

//hapticsThread->set(updateHaptics, CHAI_THREAD_PRIORITY_HAPTICS);

boost::thread hapticsThread(&updateHaptics);

}

Example of using the Boost scoped mutex lock in a method that sets the camera:

void cScene::setSpectatorCamera( unsigned int a_hapticDeviceIndex )

{

boost::mutex::scoped_lock lock(m_lock);

if (m_cameraSet != NULL) m_cameraSpectator->m_cameraPosition = m_cameraSet->m_cameraPosition;

m_cameraSpectator->setHapticDeviceIndex(a_hapticDeviceIndex);

m_cameraSet = m_cameraSpectator;

}

The CHAI 3D high-level application uses a main thread (graphics rendering, application logic) and a special haptic thread.

The main reason for creating an extra thread is the sample rate of the haptic device.

The Novint Falcon programmer's guide states that you have approximately one millisecond to calculate the forces in order to achieve a credible haptic effect.

The time spent on haptic calculations is called a haptic step in the CHAI 3D high-level application and is shown in the status bar at the top of the screen.

The haptic thread uses and modifies resources that the main thread is working with.

Adding a new mesh into the scene from the haptic thread without locking out the main thread can cause many miscalculations, and the application may become unstable.

The boost::mutex::scoped_lock locks the m_lock mutex for the whole enclosing scope.

If any other thread tries to lock m_lock, it has to wait until the owning thread unlocks it.

Such a simple mutual exclusion scheme is sufficient because there are just two threads in the application and the protected operations take a reasonable time to complete.
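
The scoped locking pattern can be tried out in isolation. The sketch below swaps in std::mutex and std::lock_guard from the C++11 standard library for boost::mutex and boost::mutex::scoped_lock. The Scene class and addFromTwoThreads function are hypothetical and only mimic two threads adding meshes to a shared scene.

```cpp
#include <cassert>
#include <cstddef>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical shared scene: a list of mesh ids guarded by one mutex.
class Scene {
public:
    void addMesh(int id) {
        std::lock_guard<std::mutex> lock(m_lock);  // unlocked at scope exit
        m_meshes.push_back(id);
    }

    std::size_t meshCount() {
        std::lock_guard<std::mutex> lock(m_lock);
        return m_meshes.size();
    }

private:
    std::mutex m_lock;
    std::vector<int> m_meshes;
};

// Lets two threads add `perThread` meshes each and returns the final count.
// Without the lock, the concurrent push_back calls would be a data race.
std::size_t addFromTwoThreads(int perThread) {
    assert(perThread >= 0);
    Scene scene;
    auto worker = [&scene, perThread]() {
        for (int i = 0; i < perThread; ++i) scene.addMesh(i);
    };
    std::thread hapticThread(worker);
    std::thread mainLikeThread(worker);
    hapticThread.join();
    mainLikeThread.join();
    return scene.meshCount();
}
```

Because every access to the mesh list goes through the same mutex, addFromTwoThreads(1000) always yields 2000 meshes.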

The CHAI 3D API offers very good integration of the ODE (Open Dynamics Engine) physics engine, which is used in the CHAI 3D high-level application.

Part of the project was the implementation of cross-platform Novint Falcon support based on libnifalcon (more on that here)

and the integration of the cross-platform PNG library libpng into CHAI 3D.

All the external libraries are precompiled and included in the directory /trunk/ext/ of the Subversion repository.

The CHAI 3D high-level application is divided into five main classes.

The most important class, cScene, defines the whole scene, i.e. the world of objects, camera, movement, haptics, ...

On one hand you split functionality into several classes, but on the other hand there's often a need for communication between them.

Such complex communication dependencies can make the source code unmanageable.

One solution is to create a singleton class that serves as the communication interface.

This solution, however, has a negative impact on code consistency because you can access the scene from everywhere.

It is then up to the programmer to use the singleton interface carefully and only when really needed.

Another singleton is a cConfig class.

It provides access to the configuration such as window width, height or current mouse position.

The cHaptic class provides methods to initialize haptic devices and to update haptic device data and haptic tools, as well as methods to obtain specific information about the haptic device.

A very specific aspect of the CHAI 3D high-level application is that you can use several haptic tools at once.

For this reason there's a std::vector of tools included in this class.

The cHaptic class also manages the 2D user interface cursors that control the hud menu at the bottom.

Part of the project was also the integration of a new virtual debugging haptic device into CHAI 3D, because the original virtual haptic device works only on the Microsoft Windows platform.

This device class is called cDebugDevice. Whereas a generic haptic device provides methods to get the position and set the force,

cDebugDevice additionally provides setPosition and getForce methods to control the haptic device.

The update method of the cHaptic class contains code that updates the cDebugDevice from the mouse by casting a generic device to cDebugDevice.

// debug device control by mouse

cDebugDevice* d1 = dynamic_cast<cDebugDevice*>(hapticDevice);

if (d1)

{

// ...

d1->setPosition(newPosition);

if (g_config.m_mouseButtonDown)

{

d1->setUserSwitch(0, true);

}

else

{

d1->setUserSwitch(0, false);

}

}

This allows the CHAI 3D high-level application to use the mouse while still working only with the haptic device interface.
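
The casting technique can be shown in a self-contained form. The classes below are hypothetical stand-ins (GenericDevice for cGenericHapticDevice, DebugDevice for cDebugDevice), and the mapping of mouse coordinates to the YZ plane is an assumption made for this sketch.

```cpp
#include <cassert>

// Hypothetical stand-in for cVector3d.
struct Vec3 {
    double x, y, z;
};

// Stand-in for the abstract generic device: position can only be read.
class GenericDevice {
public:
    virtual ~GenericDevice() {}
    virtual Vec3 getPosition() const = 0;
};

// Stand-in for the debug device: position can also be written.
class DebugDevice : public GenericDevice {
public:
    DebugDevice() : m_position() {}
    virtual Vec3 getPosition() const { return m_position; }
    void setPosition(const Vec3& p) { m_position = p; }

private:
    Vec3 m_position;
};

// Stand-in for a real hardware device that ignores the mouse.
class HardwareDevice : public GenericDevice {
public:
    virtual Vec3 getPosition() const {
        Vec3 p = { 0.0, 0.0, 0.0 };
        return p;
    }
};

// Feeds the mouse position into the device only if it really is a
// DebugDevice; dynamic_cast returns a null pointer for any other type.
// Returns true if the device was updated.
bool feedMouse(GenericDevice* device, double mouseX, double mouseY) {
    assert(device != 0);
    DebugDevice* debug = dynamic_cast<DebugDevice*>(device);
    if (!debug) return false;
    Vec3 p = { 0.0, mouseX, mouseY };  // assumed mapping to the YZ plane
    debug->setPosition(p);
    return true;
}
```

The rest of the application keeps calling getPosition through the generic interface and does not need to know whether the position came from a mouse or from hardware.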

The fourth class is cHud, which provides the interactive menu interface at the bottom of the screen.

Every state of the menu is defined by an sHud structure that contains the name of the menu, the menu items and a CHAI 3D generic object.

Clicking the hud invokes the onClick event, and processHudAction is then called with the hud type,

the action (specified by the clicked item, or empty if no item was clicked), the item index and the haptic index as arguments.

The haptic index is fundamental to the interface because sometimes you need to know which haptic device to use for a certain activity (e.g. the fly-by spectator camera).

The cCameraSet abstract class defines the interface for camera interaction.

Every camera type has to inherit from cCameraSet to work properly.

The update method takes only one parameter, a_stepTime, which is the time elapsed since the last rendered frame.

//---------------------------------------------------------------------------

// cCameraSet abstract class

//---------------------------------------------------------------------------

class cCameraSet

{

public:

cVector3d m_cameraPosition;

cVector3d m_cameraTarget;

cVector3d m_cameraUpVector;

virtual void update(double a_stepTime) = 0;

private:

};
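
To give an idea of what a concrete camera type could look like, the sketch below derives a hypothetical OrbitCamera from a simplified copy of the interface. It is not one of the application's cameras: Vec3 stands in for cVector3d, CameraSet mirrors cCameraSet (with a virtual destructor added), and the circular motion is an assumption chosen for illustration.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for cVector3d.
struct Vec3 {
    double x, y, z;
};

// Simplified copy of the camera interface.
class CameraSet {
public:
    Vec3 m_cameraPosition;
    Vec3 m_cameraTarget;
    Vec3 m_cameraUpVector;

    virtual ~CameraSet() {}
    virtual void update(double a_stepTime) = 0;
};

// Hypothetical camera that circles around its target in the XY plane.
class OrbitCamera : public CameraSet {
public:
    OrbitCamera(double a_radius, double a_angularSpeed)
        : m_radius(a_radius), m_angularSpeed(a_angularSpeed), m_angle(0.0) {
        assert(a_radius > 0.0);
        Vec3 origin = { 0.0, 0.0, 0.0 };
        Vec3 up = { 0.0, 0.0, 1.0 };
        m_cameraTarget = origin;
        m_cameraUpVector = up;
        place();
    }

    // Advances the angle by speed * elapsed time, so the motion does not
    // depend on the frame rate.
    virtual void update(double a_stepTime) {
        m_angle += m_angularSpeed * a_stepTime;
        place();
    }

private:
    void place() {
        m_cameraPosition.x = m_cameraTarget.x + m_radius * std::cos(m_angle);
        m_cameraPosition.y = m_cameraTarget.y + m_radius * std::sin(m_angle);
        m_cameraPosition.z = m_cameraTarget.z;
    }

    double m_radius;
    double m_angularSpeed;  // radians per second
    double m_angle;         // current angle in radians
};
```

Scaling the motion by a_stepTime is the usual reason for passing the frame time into update.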

This text should have helped you understand the basics of the CHAI 3D high-level application source code, its main classes and the communication between them.

It is by no means exhaustive documentation, so please feel free to contact me whenever you face a problem either compiling the application or understanding the code.

Please see the source code in the repository.

Project HDAL - minimal

Project HDAL - minimal falls within the minimal set of applications.

It uses the proprietary driver and SDK from the Novint Falcon manufacturer called Haptic Device Abstraction Layer (HDAL) - Novint HDAL SDK 2.1.3.

It does not handle the situation where the Novint Falcon driver is missing from the system; in that case the application may throw an access violation error.

The purpose of this application is to show how to access and send data using the HDAL SDK.

I strongly recommend reading the HDAL Programmer's Guide (PDF), which is part of the SDK (see the prerequisites for the download link).

It is definitely the best guide to the SDK and even gives general information about haptic devices.

After successful initialization, access to the device data is divided into two separate callbacks.

The first one is the non-blocking callback NonBlockingServoOpCallback, which grabs the data from the device and saves it into double positionServo[3].

You can start this callback by calling the SDK function hdlCreateServoOp.

/** Schedule an operation (callback) to run in the servo loop.

** Operation is either blocking (client waits until completion)

** or non-blocking (client continues execution).

**

** @param[in] pServoOp Pointer to servo operation function

** @param[in] pParam Pointer to data for servo operation function

** @param[in] bBlocking Flag to indicate whether servo loop blocks

** @return Handle to servo operation entry

** @par

** Errors: None

** @par

** @see hdlDestroyServoOp, hdlInitNamedDevice, hdlInitIndexedDevice

*/

HDLAPI HDLOpHandle HDLAPIENTRY hdlCreateServoOp(

HDLServoOp pServoOp, /* function pointer callback */

void* pParam, /* parameter to callback */

bool bBlocking /* is callback blocking/non-blocking */

);

The second callback is a blocking callback, as set by the bBlocking parameter of hdlCreateServoOp.

This callback prevents the data from being overwritten in the middle of the haptic update process, as seen in the BlockingServoOpCallback function.
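
The division of work between the two callbacks can be sketched without the SDK. The code below is a single-threaded simulation, not HDAL code: ServoLoop, ServoOpResult, HapticState, sampleDevice and copyToApp are hypothetical names, and the "device" is just a counter. It only illustrates one callback that keeps refreshing the servo-side data and another that runs once between two updates to take a consistent copy.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Result codes of a servo operation (hypothetical names for this sketch).
enum ServoOpResult { SERVOOP_EXIT, SERVOOP_CONTINUE };
typedef ServoOpResult (*ServoOp)(void* userData);

struct HapticState {
    double deviceTick;        // simulated raw device reading
    double positionServo[3];  // refreshed on every servo tick
    double positionApp[3];    // consistent copy for the application
};

// Runs on every tick: grabs data from the simulated device.
ServoOpResult sampleDevice(void* userData) {
    HapticState* s = static_cast<HapticState*>(userData);
    s->deviceTick += 1.0;
    for (int i = 0; i < 3; ++i) s->positionServo[i] = s->deviceTick * (i + 1);
    return SERVOOP_CONTINUE;
}

// Runs once: copies the servo data as a whole, between two updates.
ServoOpResult copyToApp(void* userData) {
    HapticState* s = static_cast<HapticState*>(userData);
    for (int i = 0; i < 3; ++i) s->positionApp[i] = s->positionServo[i];
    return SERVOOP_EXIT;
}

// Tiny stand-in for the servo loop: runs scheduled operations in order
// and drops those that ask to exit.
class ServoLoop {
    struct Entry { ServoOp op; void* userData; };
    std::vector<Entry> m_ops;

public:
    void schedule(ServoOp op, void* userData) {
        assert(op != 0);
        Entry e = { op, userData };
        m_ops.push_back(e);
    }

    void tick() {
        std::vector<Entry> keep;
        for (std::size_t i = 0; i < m_ops.size(); ++i) {
            if (m_ops[i].op(m_ops[i].userData) == SERVOOP_CONTINUE) {
                keep.push_back(m_ops[i]);
            }
        }
        m_ops.swap(keep);
    }

    std::size_t pending() const { return m_ops.size(); }
};
```

In the real SDK the servo loop runs in its own thread, which is why the copy has to be scheduled as a blocking operation instead of being done directly by the application.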

Please see the commented source code of project HDAL - minimal in the repository.

Project JTouchToolkit - minimal

The JTouchToolkit API seems to be a dead project, but it still provides quite a good way to develop a Java application that uses a haptic device.

JTouchToolkit currently supports Novint Falcon and OpenHaptics devices.

Please see the JTouchToolkit website for updates.

The Java platform may be operating-system independent, but the Novint Falcon support is strictly Microsoft Windows dependent because it wraps the Novint HDAL driver.

If you wish to use the Novint Falcon on Linux with the Java programming language, see the libnifalcon website.

The libnifalcon Java minimal application is planned for the next major release of the Haptic API suite.

The trunk/src/HapticApi/jtouchtoolkit/ directory of the repository contains the javadoc and the Java archive JTouchToolkit_2.0_beta.jar.

You can compile project JTouchToolkit - minimal in Microsoft Visual Studio with a custom tool that uses javac.exe, which has to be reachable through the PATH environment variable.

However, for better debugging and other tools you can use the provided Eclipse project files.

The trunk/src/HapticApi/jtouchtoolkit/bin directory contains the libraries JHDAL.dll, JHDAPI.dll and JHLAPI.dll needed to run the application.

JTouchToolkit comes with a way of adding haptic listeners that is very similar to HDAL callbacks, but without the need to choose blocking or non-blocking access.

By inheriting (implementing) HapticListener and overriding the appropriate methods you get a class that can operate with the device.

Initialization of the device is done by the static function FalconDevice.newFalconDevice, which takes the index of the device.

You can count the number of devices with the static function FalconDevice.countDevices().

Please see the source code below:

Haptic listener

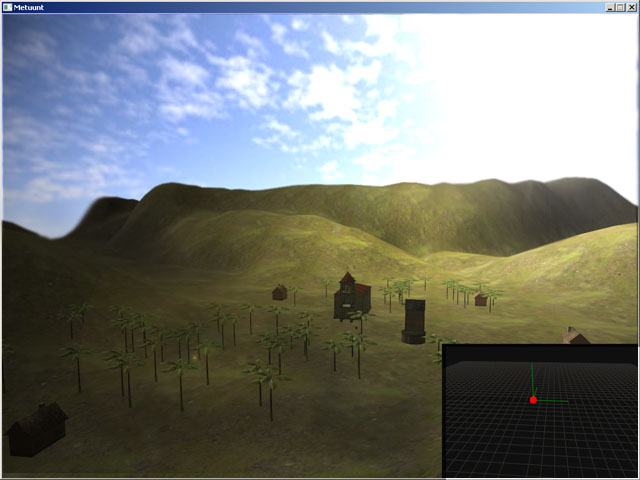

Project Metuunt

The project Metuunt was intended to be a basic 3D engine for an MMORTS game.

It was a semester project for a basic programming course (more info here).

The documentation for this project is available only in Czech here.

The goal of this project was to implement haptic support in an existing project that had no plans to include such support at the time of its development.

A brief overview:

Metuunt is divided into several classes providing special functionality, i.e.

loading 3DS model file format (C3ds.h),

octree frustum culling (COctree.h),

input device processing (CInput.h),

high-precision timer support (CTimer.h),

configuration (CConfig.h),

logging mechanism (CLog.h),

world object management (CObject.h),

graphics management (CGraphics.h)

and the scene containing objects (CScene.h).

The global instance of the CSystem class, System, provides the communication interface.

The application uses WINAPI functions to manage window creation and DirectX 9.0c to render the scene.

The important file for the Haptic API survey is CHaptic.h, which contains the CChai class definition.

It manages initialization, receiving the haptic position for the spectator camera, and the calculation/normalization of global forces.

The basic object sphere collision (this can be tested against the church building) is defined in the CObject class itself.

Given the three-dimensional position vector of a collision tool, CObject::getCollisionForce returns the normalized force.

All forces are summed up and sent to the device by invoking the CChai::SetForce(D3DXVECTOR3 a_force) method.

If the magnitude of the force is greater than the maximum force allowed for the given haptic device, the update algorithm simply normalizes the force.

// returns collision force vector against sphere

D3DXVECTOR3 CObject::getCollisionForce(D3DXVECTOR3 *a_point)

{

D3DXVECTOR3 returnForce (0, 0, 0);

D3DXVECTOR3 diff (a_point->x - x, a_point->y - y, a_point->z - z);

float diffSize = sqrt(diff.x*diff.x + diff.y*diff.y + diff.z*diff.z);

if (diffSize > collisionSphereRadius) return returnForce;

D3DXVec3Normalize (&returnForce, &diff);

returnForce = (-1 * returnForce);

D3DXVECTOR4 tmp; // transformed vector, allocated on the stack so nothing leaks

D3DXVec3Transform(&tmp, &returnForce, &System.Graphics->viewMatrix);

D3DXVECTOR3 tmp3(tmp.x, tmp.y, tmp.z);

D3DXVec3Normalize(&returnForce, &tmp3);

return returnForce;

}
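
The summation and limiting of forces mentioned above can be sketched independently of DirectX. The code below is not the CChai implementation: Vec3 is a hypothetical stand-in for D3DXVECTOR3, and limitForce assumes that "normalizing" means scaling the summed force down to the maximum magnitude while keeping its direction.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for D3DXVECTOR3.
struct Vec3 {
    double x, y, z;
};

// Adds up the collision forces of all objects.
Vec3 sumForces(const std::vector<Vec3>& forces) {
    Vec3 total = { 0.0, 0.0, 0.0 };
    for (std::size_t i = 0; i < forces.size(); ++i) {
        total.x += forces[i].x;
        total.y += forces[i].y;
        total.z += forces[i].z;
    }
    return total;
}

// Scales the force down to `maxForce` if it is too strong; weaker forces
// are passed through unchanged. The direction is always preserved.
Vec3 limitForce(const Vec3& force, double maxForce) {
    assert(maxForce > 0.0);
    double size = std::sqrt(force.x * force.x + force.y * force.y + force.z * force.z);
    if (size <= maxForce) return force;
    double scale = maxForce / size;
    Vec3 limited = { force.x * scale, force.y * scale, force.z * scale };
    return limited;
}
```

For example, the forces (3, 0, 0) and (0, 4, 0) sum to (3, 4, 0) with magnitude 5; with a maximum force of 2.5 the result is (1.5, 2, 0).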

The haptic position and object force viewport in the bottom right of the screen is managed by the renderHapticViewport method, which simply reads position data from the haptic device.

Screenshot of the project Metuunt running on Microsoft Windows.

Please see the source code in the repository.

libnifalcon - minimal CLI

The libnifalcon API comes with a very programmer-friendly way to test your application quickly.

Part of the API is the class (or framework) FalconCLIBase,

which manages all the initialization, command line parsing and firmware loading for you.

To use FalconCLIBase, just inherit from the class and override the addOptions and parseOptions methods.

The runLoop method shows the standard loop for getting device data and setting the type of libnifalcon kinematics.

Please see the source code below:

CHAI 3D

The primary focus of the Haptic API survey was on the CHAI 3D API.

Some technical difficulties, bugs and missing support were found during the development of testing applications.

For this reason, the CHAI 3D library source code used by the Haptic API suite was modified.

The major upgrade is libnifalcon support in CHAI 3D, which lets you use the Novint Falcon device on Linux and Mac operating systems.

You may find this in /trunk/ext/chai3d/src/devices/CLibnifalconDevice files.

Another upgrade is support for loading PNG images with an alpha channel (8 bits per channel).

You may find that in /trunk/ext/chai3d/src/files/CFileLoaderPNG.

A few bugs were also fixed: recursive call of setMaterial, bad user switch behavior on Novint HDAL, ...

Please see the source code in the repository.

HAPI - minimal

The HAPI - minimal application is the smallest low-level haptic API application (in terms of source code length).

The HAPI library contains an AnyHapticsDevice class that initializes any available haptic device by calling the initDevice and enableDevice methods.

The HAPI::HAPIHapticsDevice::DeviceValues structure is then used to work with the haptics data.

Please read the prerequisites during the installation of the Haptic API suite to run this application correctly!

H3DAPI - highlevel

The H3DAPI - highlevel application shows the basic use of the X3D and Python interfaces of H3D API.

The application uses the AnyDevice X3D node to work with the haptic device and the god-object algorithm to render haptic shapes.

The scene contains one animated sphere with a frictional surface effect and one mouse proxy sphere.

The haptic tool, defined as a stylus, is also represented as a sphere.

Please read the prerequisites during the installation of the Haptic API suite to run this application correctly!

CHAI 3D - haptic benchmark

CHAI 3D - haptic benchmark is an application developed for performance testing of the libnifalcon library.

The application uses the same CHAI 3D functionality as the CHAI 3D - minimal application,

and the Boost library is used for thread handling.

There are three tests in the benchmark.

The first test starts a single extra thread for all available devices and measures the frequency of the haptic loop for five seconds.

The second test creates a thread for every device.

Every thread waits for a synchronization flag called threadHandler1 and then runs the haptic loop while measuring its frequency.

The third test measures the attainable position resolution of every device

by computing, in each iteration of the haptic loop, the distance the haptic tool has travelled in the workspace.

The user has to move the haptic tool adequately to get correct results.
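
The two kinds of measurement can be sketched as small helper functions. This is not the benchmark's source code: Vec3 is a hypothetical stand-in for cVector3d, and taking the smallest non-zero step between consecutive positions as the "attainable resolution" is an assumption about how such a value could be derived.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cVector3d.
struct Vec3 {
    double x, y, z;
};

// Frequency of the haptic loop: iterations completed per second.
double loopFrequencyHz(unsigned long iterations, double elapsedSeconds) {
    assert(elapsedSeconds > 0.0);
    return static_cast<double>(iterations) / elapsedSeconds;
}

// Smallest non-zero distance between two consecutive positions.
// Returns 0.0 if the tool never moved, which is why the user has to
// move the tool during the test.
double smallestStep(const std::vector<Vec3>& positions) {
    double best = 0.0;
    for (std::size_t i = 1; i < positions.size(); ++i) {
        double dx = positions[i].x - positions[i - 1].x;
        double dy = positions[i].y - positions[i - 1].y;
        double dz = positions[i].z - positions[i - 1].z;
        double step = std::sqrt(dx * dx + dy * dy + dz * dz);
        if (step > 0.0 && (best == 0.0 || step < best)) best = step;
    }
    return best;
}
```

For instance, 5000 loop iterations in five seconds correspond to a frequency of 1000 Hz.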

Please read the prerequisites during the installation of the Haptic API suite to run this application correctly!

Used API: JTouchToolkit - https://jtouchtoolkit.dev.java.net/

Prerequisites

Novint Falcon drivers

The Haptic API suite is primarily focused on the Novint Falcon device. You will not be able to run some test applications if you do not have the Novint Falcon drivers installed.

You can download Novint Falcon drivers here:

https://backup.filesanywhere.com/fs/v.aspx?v=8e6d6b8e5e6473bc9d6b

Compatible tested drivers: setup.Falcon.v3.1.4.a_090407.exe

You can try to download latest Novint Falcon drivers here:

http://home.novint.com/support/download.php

Novint HDAL SDK

To compile a testing application that uses the HDAL you have to download the Novint HDAL SDK. Tested version: Novint HDAL SDK 2.1.3.

You can download Novint HDAL SDK here:

http://home.novint.com/products/sdk.php

Microsoft DirectX

The Haptic API suite contains one testing application that uses the Microsoft DirectX SDK (August 2009). I haven't included the DirectX redistributables in this setup, so if you wish to run that testing application, follow the link below and install the latest End-User Runtime (or the SDK if you plan to compile the Haptic API suite).

Microsoft DirectX web page here:

http://www.microsoft.com/windows/directx/

Oracle Java

There's a Java testing application in the Haptic API suite, and you need to download the latest end-user Java from the link below for that application to run.

Oracle Java download here:

http://www.java.com/getjava/

If you plan to compile the Java testing application that uses JTouchToolkit, you have to download the Java Development Kit.

Oracle JDK SE download here:

http://java.sun.com/javase/downloads/index.jsp

Python 2.5

The H3D API - highlevel application needs Python 2.5 to be installed.

Python 2.5 download here:

http://www.python.org/download/releases/2.5.5/

D2XX drivers

If you do not wish to install Novint Falcon drivers and you just want to try libnifalcon, you should still install D2XX drivers that allow direct access to the USB device through a DLL.

D2XX drivers download here:

http://www.ftdichip.com/Drivers/D2XX.htm

Compatible tested drivers: 2.06.00, 3rd November 2009

System settings

It is recommended to use the CHAI 3D high-level application with the vertical synchronization (V-Sync) setting turned on. You can specify that in the control panel of your graphics card driver. V-Sync is turned on by default in most cases, so if you haven't changed anything, the application should run fine.

Disclaimer

The Haptic API suite provided by Petr Kadlecek may be freely distributed, provided that no charge above the cost of distribution is levied, all licenses of subprograms are preserved and that the disclaimer below is always attached to it.

Many testing applications were inspired by examples provided by external API authors.

The Haptic API suite is provided as is without any guarantees or warranty.

Although the author has attempted to find and correct any bugs in the Haptic API suite, the author is not responsible for any damage or losses of any kind caused by the use or misuse of the applications.

The author is under no obligation to provide support, service, corrections, or upgrades to the Haptic API suite.

For more information, please contact the author.

2010 (beta), under heavy construction, Petr Kadlecek